The Claude Mythos Controversy: A Wake-Up Call for Finance

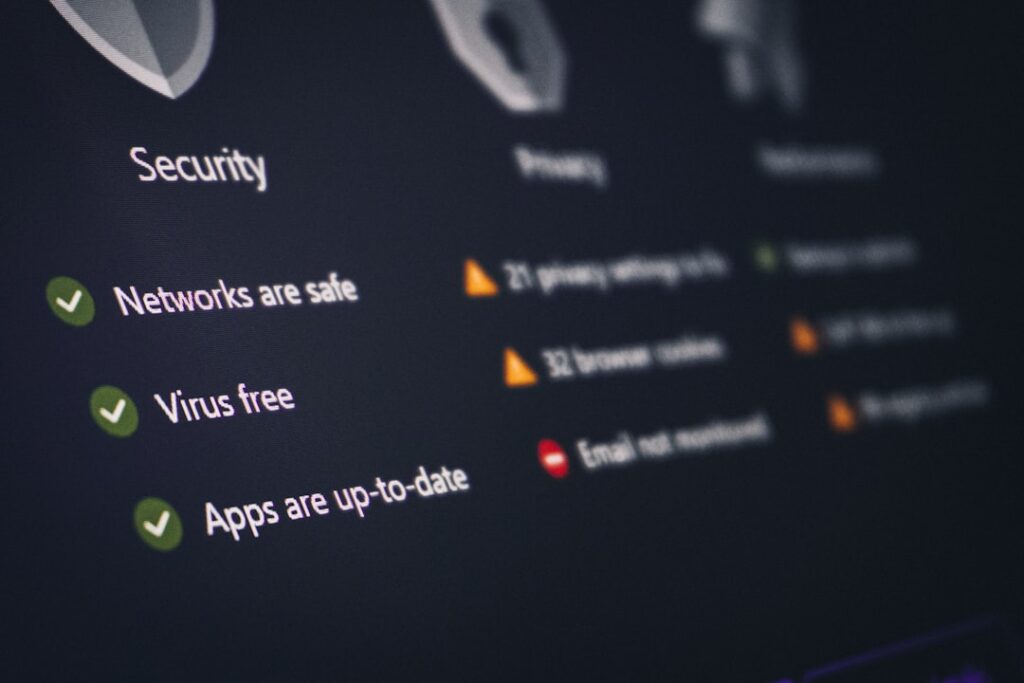

The artificial intelligence landscape has just shifted, and Wall Street is paying close attention. Anthropic, the AI research company behind Claude, has made bold claims about its latest iteration, Claude Mythos, that have sent ripples of concern through the financial services industry. At the heart of the controversy lies a deceptively simple assertion: this AI tool can outperform human experts at certain hacking and cybersecurity tasks. For an industry built on the sanctity of digital security, these claims are far more than technical trivia; they signal a fundamental challenge to established cybersecurity paradigms.

The financial sector’s anxiety is hardly surprising. Banks, investment firms, and fintech companies operate in an environment where security breaches can translate into billions of dollars in losses, reputational damage, and regulatory penalties. When a major AI company suggests that artificial intelligence might excel at the very activities that threaten these institutions, the natural response is concern bordering on alarm. This isn’t paranoia; it’s prudent risk assessment from an industry acutely aware of its vulnerabilities.

Understanding Claude Mythos and Its Capabilities

So what exactly is Claude Mythos, and why has it garnered such attention? Claude is Anthropic’s flagship large language model—essentially an AI system trained to understand and generate human language with remarkable sophistication. The Mythos iteration represents an advancement in this technology, with proponents claiming enhanced capabilities across numerous domains. The specific claim that has financial institutions worried pertains to its purported ability to assist with or execute hacking activities and cybersecurity penetration testing at levels that match or exceed human expertise.

This claim deserves careful unpacking. The assertion isn’t that Claude Mythos will spontaneously begin hacking into bank servers with malicious intent. Rather, the concern centers on the tool’s potential utility for bad actors who might use it to identify vulnerabilities, develop exploits, or execute sophisticated cyber attacks. If an AI system can identify security weaknesses as effectively as a top-tier penetration tester, it puts that level of skill within reach of far more attackers. The threat grows not because of what the AI wants to do, but because of what others might convince it to do, or how they might use its capabilities.

The Financial Sector’s Legitimate Anxieties

The financial world’s trepidation isn’t unfounded. Modern financial infrastructure depends on increasingly complex networks of interconnected systems, each representing a potential point of vulnerability. Cybersecurity professionals working within banks and investment firms spend considerable resources identifying and patching weaknesses before malicious actors can exploit them. The introduction of a powerful AI tool capable of accelerating the discovery of vulnerabilities represents a genuine operational concern.

Consider the implications: if Claude Mythos can identify exploitable flaws in security systems faster than human experts can patch them, the traditional cat-and-mouse game between defenders and attackers shifts dramatically in favor of the attackers. Financial institutions pride themselves on their security posture, but an AI-assisted offensive capability could fundamentally alter the risk calculus. The scenario is conditional on the claims holding up, but the reasoning behind it is straightforward: in any adversarial domain, a technological advantage redistributes power toward whoever holds it.

Separating Hype from Reality

That said, the conversation surrounding Claude Mythos deserves nuance. Technology enthusiasts and skeptics have offered differing interpretations of Anthropic’s claims. Some security experts question whether Claude Mythos truly represents the step change in capability that recent headlines suggest. They point out that sophisticated hacking has long required not just technical knowledge but also contextual understanding, social engineering acumen, and real-time adaptation, areas where AI systems, despite their improvements, still lag behind human operators.

Moreover, Anthropic itself has publicly emphasized its commitment to responsible AI development. The company has implemented safeguards and usage policies designed to prevent misuse of its tools. These aren’t perfect solutions, but they represent a genuine effort to balance innovation with safety considerations. The question, then, isn’t whether Claude Mythos poses risks—it clearly does—but rather whether those risks are being adequately managed and whether existing regulatory frameworks can keep pace with the technology.

Regulatory and Systemic Implications

The Claude Mythos controversy arrives at a pivotal moment for AI regulation. Policymakers globally are grappling with how to create frameworks that encourage innovation while mitigating catastrophic risks. Financial regulators, in particular, face mounting pressure to address cybersecurity as a systemic risk. If AI tools genuinely enhance offensive cyber capabilities, regulators must consider new requirements for financial institutions—stricter access controls, more frequent security audits, enhanced monitoring for suspicious activities, and potentially mandatory AI-specific security protocols.

The conversation also highlights a broader challenge in the AI era: the difficulty of keeping security measures ahead of offensive capabilities. Traditional cybersecurity operates on the assumption that defenders enjoy certain inherent advantages—they control the systems being protected and can implement improvements at will. AI-assisted attacks potentially erode these advantages by accelerating the discovery of vulnerabilities and enabling faster exploitation.

Looking Forward: Risk Management in the AI Age

As the financial industry processes these developments, several imperatives emerge. First, financial institutions must take seriously the possibility that their cybersecurity practices, while robust by historical standards, may require substantial enhancement to remain effective against AI-augmented threats. This isn’t a call for panic but rather for prudent risk management and proactive investment in next-generation security infrastructure.

Second, the tech industry must commit to transparency about AI capabilities and limitations. If Anthropic or other companies make claims about their systems’ abilities in sensitive domains like cybersecurity, those claims should be thoroughly documented and independently verified. The financial sector deserves clarity about what risks it actually faces.

Third, policymakers must accelerate their work on AI governance frameworks. The regulatory environment cannot afford to lag significantly behind technological capability. Whether through new regulations, industry standards, or international agreements, some mechanism must ensure that powerful AI tools are deployed responsibly and that their risks are proportionate to their benefits.

Claude Mythos represents a crossroads moment for AI deployment in sensitive sectors. The financial industry’s concerns are entirely legitimate, but they needn’t paralyze decision-making or stifle innovation. What’s required is clear-eyed assessment of both capabilities and risks, coupled with serious commitment to governance frameworks that can evolve as the technology does.

This report is based on information originally published by BBC News. Business News Wire has independently summarized this content.